TL;DR

- Solana's historical data has been expensive, slow, and bottlenecked by Bigtable since genesis

- Hydrant is Triton's new Rust backend that ingests the full Solana ledger into ClickHouse and serves it via high-performance JSON-RPC

- Three methods already live on Triton's infrastructure (getTransaction, getSignaturesForAddress, getSignatureStatuses), with more on the way

- Latency improved for getSignaturesForAddress (1.75x faster at P90) and getSignatureStatuses (1.6x faster at P50), with recent data served in under 1 ms from an in-memory cache

- All historical queries priced the same: $0.08/GB bandwidth + $10/M requests, regardless of epoch

- Soon to be released as open source under the AGPL, as an open standard for a complete ledger pipeline

Introduction

If you're building a wallet, an explorer, or a tax compliance tool on Solana, you know that history is messy, engineering-heavy, and full of custom logic.

If you know Triton, you know we're on a mission to solve developer problems.

On the real-time side, we've already shipped the building blocks most teams need: Dragon's Mouth (Yellowstone gRPC), Whirligig for faster and more reliable WebSockets, and Fumarole for persistent, data-complete streaming. For transaction sending, there's Jet for direct TPU sends and Shield for MEV protection.

But historical data remained a heavy lift, whether you're a developer running backfills, a founder building analytics, or an end user who just wants their dashboard to load.

What makes Solana history hard

Solana runs one of the largest ledgers in crypto: 400+ TB of history, 400+ billion transactions, and trillions of rows of on-chain data. Querying that much data at speed is as much an infrastructure problem as it is a big data problem.

Most providers rely on Bigtable as a historical backend, but it was never designed for the access patterns Solana demands: it's near-impossible to adapt, extremely slow for random access, and has seen few improvements since genesis. Every provider relying on it inherits those limitations, and so do their customers.

For users, this manifests as UI lag. You scroll down in your wallet, the spinner appears, and you wait. The deeper you go into the history, the slower it gets. Reports that pull weeks of data can take minutes to generate.

After years of building open history infrastructure for Solana, we realised that access could be dramatically more performant, even at this scale. But it couldn't be patched in. It needed a ground-up rebuild, and that's how Hydrant was started.

What is Hydrant?

We moved off Bigtable entirely and onto ClickHouse, a columnar database designed for the workloads Solana history demands: massive immutable datasets, predictable access patterns, and reads across billions of rows.

The result is Hydrant: a new Rust backend that ingests the full ledger into ClickHouse and exposes it as spec-compliant JSON-RPC, faster, more efficiently, and more affordably. When it's ready, we're releasing the full codebase under AGPL so anyone can run, audit, or extend it.

Every layer inside is optimised for how developers query history:

- Sorted columnar storage: every ClickHouse table is physically sorted for the queries it serves, so reads skip irrelevant data entirely

- Purpose-built materialised views: when data lands, ClickHouse automatically creates pre-sorted copies tailored to specific access patterns, so lookups like "all signatures for this address" are fast by design

- Head cache for recent data: the most recent slots live in memory, so queries for the last few minutes resolve in under 1 ms without a database round-trip, and processed commitment becomes available for historical methods

- Hot address optimisation: heavily queried addresses like USDC get their own dedicated lookup tables, keeping high-traffic queries fast without slowing down everything else

- Response-level observability: every response carries headers showing which backend served it (ClickHouse, the head cache, or both) and how much work it did (rows read, rows returned, bytes processed). If you're debugging performance, the data is right there in the response
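To make the "reads skip irrelevant data" point concrete, here is a toy model of a sparse index over sorted rows, the general idea behind ClickHouse's MergeTree primary index. It is a sketch under stated assumptions, not Hydrant's code: the row layout `(address, slot, signature)`, the function names, and the tiny granule size are all made up for illustration, and Hydrant's actual sort keys are not described in this post.

```python
from bisect import bisect_left, bisect_right

GRANULE = 4  # toy value; ClickHouse MergeTree defaults to 8192 rows per granule


def build_marks(rows, granule=GRANULE):
    """Record the sort key (address) of the first row in each granule."""
    return [rows[i][0] for i in range(0, len(rows), granule)]


def lookup(rows, marks, address, granule=GRANULE):
    """Return (matches, rows_scanned) for one address.

    `rows` must be sorted by address. Only the granules that could contain
    the address are scanned; every other granule is skipped via the marks.
    """
    # The address may start in the granule just before the first mark >= address.
    first = max(bisect_left(marks, address) - 1, 0)
    # It cannot appear after the last granule whose first key is <= address.
    last = bisect_right(marks, address) - 1
    start = first * granule
    end = min((last + 1) * granule, len(rows))
    scanned = rows[start:end]
    return [row for row in scanned if row[0] == address], len(scanned)


# Hypothetical data: 12 rows across three addresses, sorted by address.
rows = sorted(
    [("A", s, f"sigA{s}") for s in range(3)]
    + [("B", s, f"sigB{s}") for s in range(5)]
    + [("C", s, f"sigC{s}") for s in range(4)]
)
marks = build_marks(rows)
matches, scanned = lookup(rows, marks, "C")
```

In this toy table, a lookup for address "C" scans two of the three granules and never touches the first. At real scale the same mechanism lets a query read a handful of granules out of billions of rows.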

What's live today

Three methods are served from Hydrant on Triton's infrastructure now:

- getSignaturesForAddress (tail latency 1.75x faster at P90)

- getSignatureStatuses (1.6x faster at P50)

- getTransaction (benchmarks coming soon)

We're bringing the remaining historical methods over from Old Faithful as we tune each one for the new backend.
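For reference, here is a minimal sketch of what requests to these three methods look like from the client side. The method names and option fields follow the standard Solana JSON-RPC spec; the endpoint URL and helper function names are placeholders invented for this example.

```python
import json
import urllib.request

# Placeholder: substitute the endpoint URL you were issued.
RPC_URL = "https://example.invalid/your-endpoint"


def build_request(method, params, request_id=1):
    """Build a JSON-RPC 2.0 request body for a Solana RPC method."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}


def signatures_for_address(address, limit=100, before=None):
    """getSignaturesForAddress: newest-first signatures involving an address."""
    options = {"limit": limit}
    if before is not None:
        options["before"] = before  # page backwards starting from this signature
    return build_request("getSignaturesForAddress", [address, options])


def signature_statuses(signatures):
    """getSignatureStatuses, asking the node to search full history."""
    return build_request(
        "getSignatureStatuses", [signatures, {"searchTransactionHistory": True}]
    )


def transaction(signature):
    """getTransaction with versioned transactions enabled."""
    options = {"encoding": "json", "maxSupportedTransactionVersion": 0}
    return build_request("getTransaction", [signature, options])


def post_rpc(payload, url=RPC_URL, timeout=10):
    """POST a request body and return the decoded JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)
```

To page through an address's history, call `post_rpc(signatures_for_address(address, limit=100))`, then pass the last signature of each page as `before` for the next one.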

What this means for you

- Wallets and portfolio trackers: transaction history loads in milliseconds, even months deep. You get a scroll without the spinner

- Explorers and analytics dashboards: transaction lookups return fast enough to power live interfaces without caching hacks

- Tax compliance and audit tools: reports that used to take minutes now generate in seconds

- End users: everything from last week and older loads faster. The noticeable lag on historical data disappears

- Infrastructure providers: when Hydrant goes open-source, you get a production-ready historical backend to deploy and build on without reinventing the foundation

- Triton customers: the methods you already use have just become faster and cheaper (no changes needed on your side)

How it works under the hood

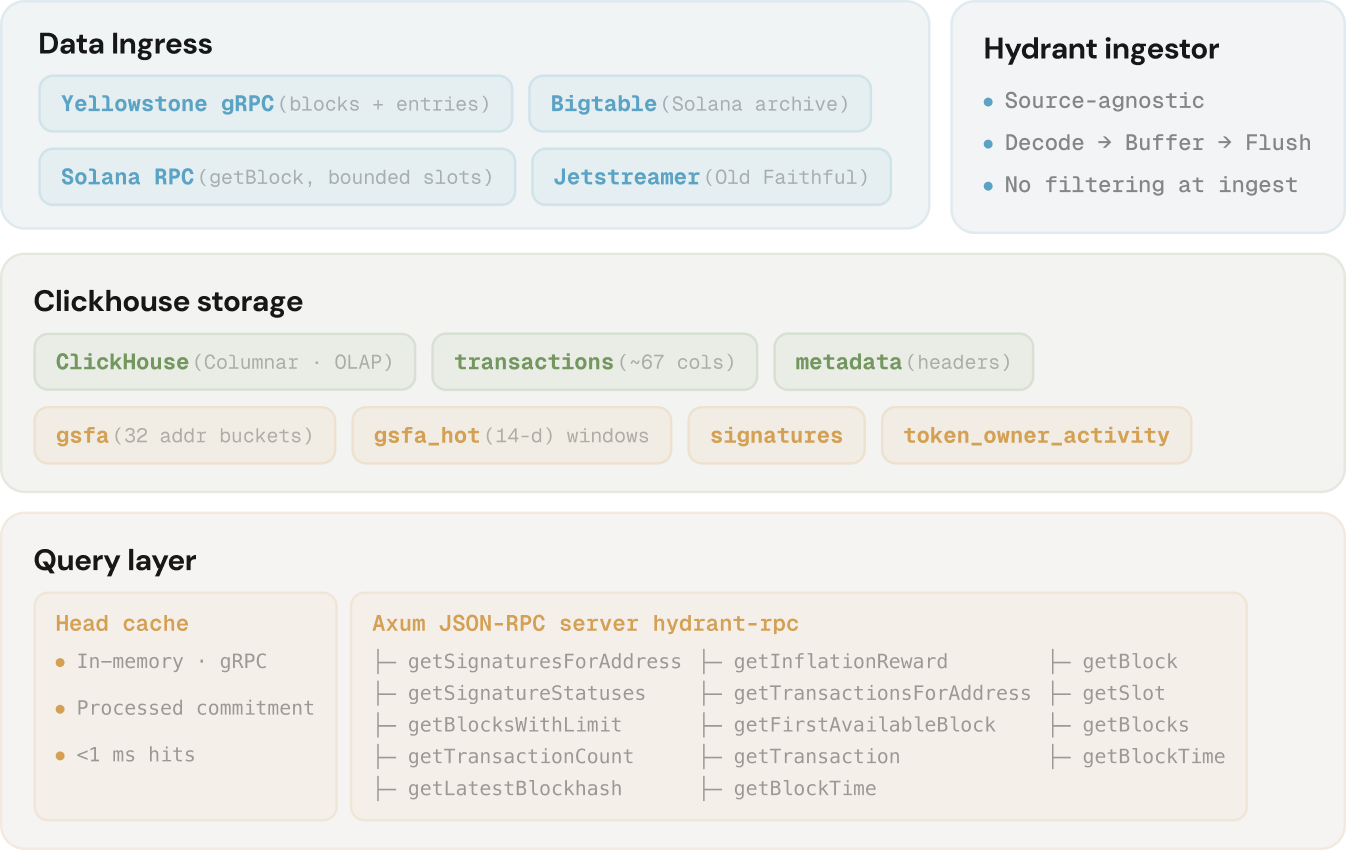

Hydrant has three layers working independently: an ingestion pipeline that gets data in, a ClickHouse storage layer that keeps it organised, and a query layer that serves it fast.

Ingestion

Hydrant's ingestion engine builds on Anza's Jetstreamer tool to replay the ledger into ClickHouse at high throughput. Once the backfill is complete, a long-running process keeps ClickHouse current with the tip of the chain via a Dragon's Mouth gRPC stream at the confirmed commitment level. There's no filtering at the ingest layer: every block, every transaction, every entry goes in.

Storage

Data flows into three ClickHouse tables, partitioned by Solana epoch. As it's written, ClickHouse creates pre-sorted copies that match the most common query patterns out of the box. The older the epoch, the cheaper the storage tier, which is how we keep the full ledger economical to serve.
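As a rough illustration of what partitioning by epoch means, the sketch below maps a slot to its epoch and back. It assumes a fixed epoch length of 432,000 slots (the mainnet-beta value) with no warmup epochs; the function names are ours, and how Hydrant computes partition keys internally is not covered in this post.

```python
SLOTS_PER_EPOCH = 432_000  # mainnet-beta epoch length, assumed constant here


def epoch_for_slot(slot):
    """Return the epoch (and so the partition) a slot falls into."""
    if slot < 0:
        raise ValueError("slot must be non-negative")
    return slot // SLOTS_PER_EPOCH


def slot_range_for_epoch(epoch):
    """Return the inclusive (first_slot, last_slot) covered by an epoch."""
    if epoch < 0:
        raise ValueError("epoch must be non-negative")
    first = epoch * SLOTS_PER_EPOCH
    return first, first + SLOTS_PER_EPOCH - 1
```

Because a slot maps to exactly one epoch, a query that knows its slot range only needs to touch the partitions for those epochs, and older partitions can sit on cheaper storage without affecting recent ones.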

Query

The query layer takes your standard JSON-RPC requests and translates them into fast ClickHouse queries behind the scenes. From your side, nothing changes except the speed.
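To give a feel for that translation step, here is a deliberately simplified sketch that turns `getSignaturesForAddress` params into a parameterised ClickHouse query. Everything about the schema is hypothetical: the table name `signatures_by_address` and its columns are invented for this example, and the real query layer (written in Rust) also has to handle `before`/`until` cursors, commitment levels, and the head cache. The `{name:Type}` placeholders are ClickHouse's query-parameter syntax, and the 1 to 1,000 limit comes from the Solana RPC spec.

```python
MAX_LIMIT = 1000  # getSignaturesForAddress accepts limits from 1 to 1,000


def translate_get_signatures_for_address(params):
    """Map JSON-RPC params to (sql, query_parameters).

    Hypothetical schema: a table sorted by (address, slot) so that one
    address's rows are stored together. Not Hydrant's actual schema.
    """
    if not params:
        raise ValueError("address is required")
    address = params[0]
    options = params[1] if len(params) > 1 else {}
    limit = options.get("limit", MAX_LIMIT)
    if not 1 <= limit <= MAX_LIMIT:
        raise ValueError(f"limit must be between 1 and {MAX_LIMIT}")

    # Values are passed as query parameters, never interpolated into the SQL.
    sql = (
        "SELECT signature, slot, block_time, err "
        "FROM signatures_by_address "
        "WHERE address = {address:String} "
        "ORDER BY slot DESC "
        "LIMIT {limit:UInt32}"
    )
    return sql, {"address": address, "limit": limit}
```

The point of the sketch is the shape of the work: the caller keeps sending the same JSON-RPC, and the backend answers it with a narrow, sorted-key lookup instead of a random-access scan.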

What it costs

Making Solana accessible has always been a priority for Triton, and Hydrant gave us the room to act on it when it comes to history.

The new architecture costs significantly less to operate, so every historical query is now priced the same as a standard RPC call, whether you're fetching a transaction from the latest slot or a block from the first epoch:

- $0.08 per GB of bandwidth

- $10 per million requests
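If you want to estimate a bill, the arithmetic is simple enough to sketch in a few lines. The two rates come straight from the list above; the function name and the example workload are made up for illustration, and the sketch ignores anything outside these two line items.

```python
from decimal import Decimal

PRICE_PER_GB = Decimal("0.08")              # USD per GB of bandwidth
PRICE_PER_MILLION_REQUESTS = Decimal("10")  # USD per million requests


def estimate_cost_usd(requests, bandwidth_gb):
    """Estimate cost in USD for a number of requests and GB transferred."""
    request_cost = Decimal(requests) * PRICE_PER_MILLION_REQUESTS / Decimal(1_000_000)
    bandwidth_cost = Decimal(str(bandwidth_gb)) * PRICE_PER_GB
    return request_cost + bandwidth_cost
```

For example, a backfill of 5 million requests that transfers 250 GB comes to $50 for requests plus $20 for bandwidth, or $70 in total, whether those requests hit the latest epoch or the first.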

Get started

If you're already a Triton customer, there's nothing to change. Some of your historical requests are already being served by Hydrant.

If you're new, self-onboarding takes only about 2 minutes:

Get an endpoint

FAQ

Does Hydrant replace Old Faithful? Yes. Hydrant is the successor to Old Faithful. Three methods are live today, and we're moving the rest over as we roll out more clusters.

Do I need to change my code? No. Hydrant serves the same standard Solana JSON-RPC methods with spec-compliant responses. Your existing integration works as-is.

Does all history cost the same? Yes. Every epoch from genesis to today is priced identically: $0.08 per GB of bandwidth plus $10 per million requests. There are no premium tiers for older data.

What about real-time data? Recent data comes back from Hydrant's head cache in milliseconds, but for real-time streaming, e.g. reacting to transactions as they happen, Triton has purpose-built tools: Deshred for the earliest signal, Dragon's Mouth for gRPC streams, Fumarole for persistent subscriptions, and Whirligig for frontend WebSockets.

How far back does the data go? The complete Solana ledger from genesis. Every epoch, block, and transaction.

Will Hydrant be open-source? Yes. We're releasing Hydrant under AGPL as soon as all the optimisations are in place. You'll be able to run, audit, and extend it yourself.